HEQCO’s skills agenda shows a lack of rigour and scientific integrity

The way HEQCO chose to communicate the results of its recent Skills Assessment Pilot Studies is a perfect example of cargo cult policy research.

Richard Feynman famously coined the metaphor “cargo cult science.” This refers to practices that display all the trappings of scientific activity, but fail in some fundamental way to follow the scientific method. Originally, cargo cults were belief systems developed in the South Pacific Islands after the Second World War; Indigenous peoples who had witnessed the landing of aircraft carrying valuable cargo came to believe that if they reproduced the landing strips and the behaviour of air traffic controllers using makeshift materials, airplanes would land again. Dr. Feynman argued that cargo cults developed both in obvious pseudoscientific endeavours and among otherwise respectable, professional scientists. Through various examples, he pointed to biases, a lack of rigour and scientific integrity as sources of cargo cultism within scientific activity.

Dr. Feynman’s wit came to mind last December, when the Higher Education Quality Council of Ontario (HEQCO) released the results of its Skills Assessment Pilot Studies. The agency – which has played a positive role over the years in funding, conducting and disseminating research to inform policy – started its Essential Adult Skills Initiative (EASI) in 2016, with the goal of measuring college and university students’ employment-related skills to inform quality improvement in higher education. Two pilot studies were released; the first was conducted by the HEQCO team, and looked at the assessment of “essential skills” among students at 20 institutions. The second was run by the Education Policy Research Initiative at the University of Ottawa, comparing the acquisition of critical thinking skills of entering and graduating classes at two institutions.

The first study, billed from the beginning as a trial for what “could become standard practice in Ontario and beyond” – and the way HEQCO has communicated its results – provides a good example of cargo cult policy research.

The study uses the OECD’s Education and Skills Online (ESO) assessment to test college and university students’ numeracy, literacy and problem-solving skills. The basic idea was to test first- and fourth-year students pursuing college diplomas and university degrees to see what differences might exist in their scores. A longitudinal design would have been preferable, as it would test the same students over time and gauge how they do after moving through their programs. But for a pilot, it is perfectly understandable to set it up this way.

However, HEQCO’s application of this tool involves a very particular interpretation of the results; the team claims that achievement of Level 3 (on a scale from 1 to 5) represents the “minimum required proficiency level for Ontario’s higher education graduates.” As explained elsewhere, this claim is unsubstantiated, according to none other than the test’s designer and a project lead at the OECD.

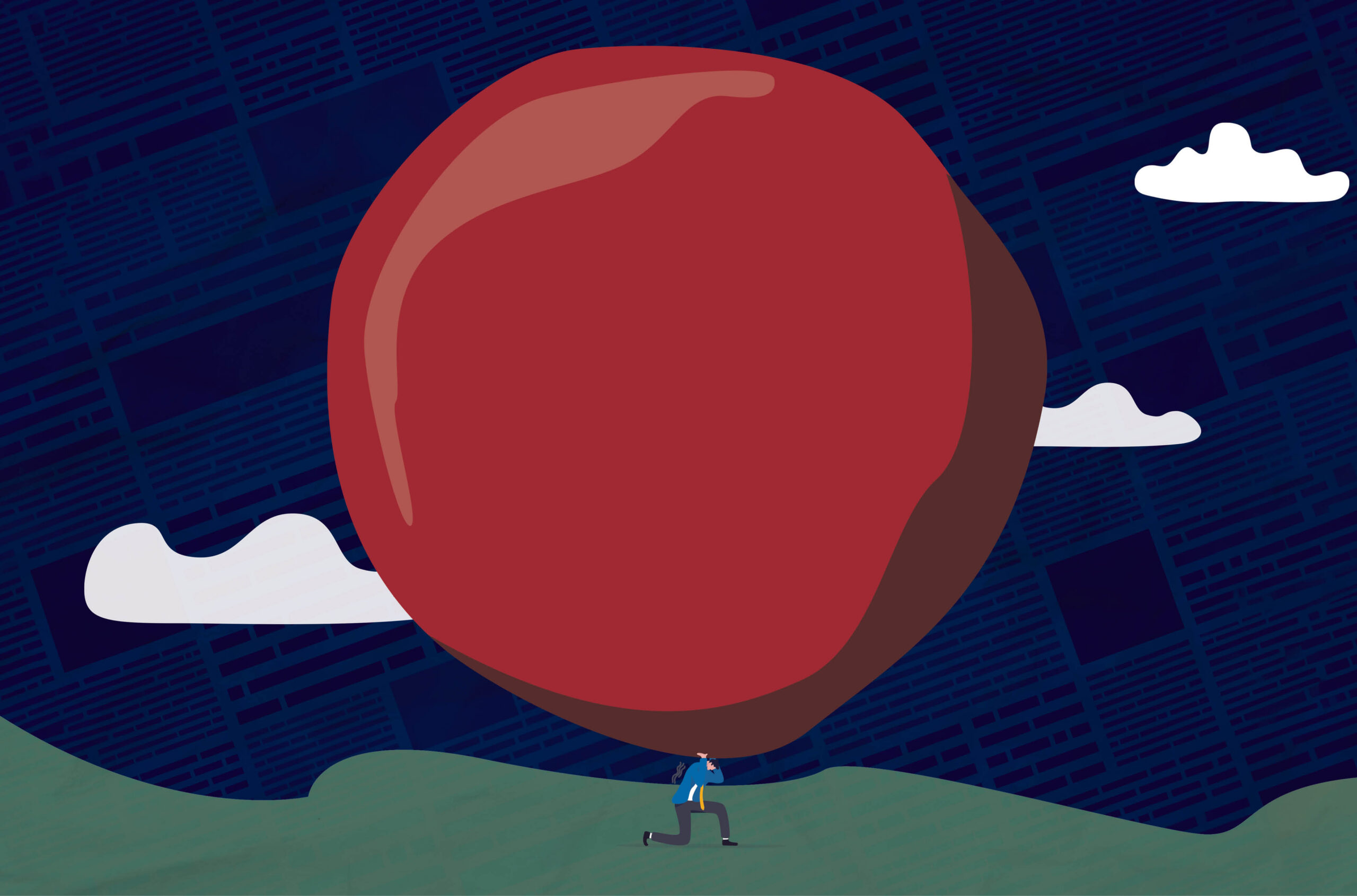

As much as people may want to see a postsecondary version of a school report card from this test – picture cargo cult believers looking at a slab of wood as if it were a radar – that is not what the test was designed for, nor can its results be used in this way.

The trial was designed in a very flexible way, as HEQCO’s priority was explicitly to make it as easy as possible for partners to participate. How were the first- and fourth-year students recruited? From which programs? How would they take the test? The answer, in one word, is “whatever.” As long as the partner institutions could get a large number of students to participate, they could pretty much do as they pleased. They chose which programs to draw students from, and used whatever recruitment strategy they regarded as appropriate. The methodology clearly states that the goal of the study was “achieving strong response rates rather than representativeness” (pp. 17-18).

Hence, the test was administered without following any of the precepts of survey research that would allow you to arrive at valid and generalizable results. Once you lay things out this way, you could justify this trial on organizational grounds: could we all work together to implement this tool? What would that look like in practice? What lessons might that raise for future studies? This is why firefighters do fire drills and armies do war games; they want to see if the processes work the way they are supposed to, but they do not purport to be putting out real fires or winning real wars.

But that is not how HEQCO framed and presented this pilot to the public. Instead, the lede of HEQCO’s press release was “One-quarter of graduating students score below adequate on measures of literacy, numeracy”, which was later picked up as the headline of a Globe & Mail article. A HEQCO blog post by the agency president and study co-author Harvey Weingarten stated, “too few students are graduating with superior literacy and numeracy skills and too many – about one in four – are graduating with literacy and numeracy levels that are inadequate to compete successfully in today’s labour market.”

Further, HEQCO claims that this puzzling study design provides evidence that – hold your breath – this project works! Hence, it calls for the implementation of this form of assessment across all institutions.

Dr. Feynman contrasted science with advertising in making his argument. He recalled an advertisement that claimed “Wesson Oil doesn’t soak through food”, which is a true statement – but one that failed to disclose that “no oils soak through food, if operated at a certain temperature”, and that “at another temperature, they all will.” What the slogan implies is obviously different from the true facts, and it is the implication that matters to the advertisers.

Unlike with cargo cults, the joke of advertising is not on those communicating it, but on us.

5 Comments

Remember the first job of HEQCO is to justify the continued existence and money coming to HEQCO… Promoting things that require their existence or create continuous licensing fees to HEQCO whether scientifically or empirically valid is just part of continuing their existence. They totally missed out on the huge consulting fees for university budgeting transformations; they probably want to try to create a new crisis in universities so they can profit. They do seem to create or ‘discover’ quite a few crises.

I don’t think it is fair to claim that Heqco is so single mindedly motivated by self interest. Weingarten’s starting point was reports of employers’ complaints that graduates had inadequate literacy, numeracy and problem solving skills. He argued that a universal test of these skills in graduates would assure employers that postsecondary institutions were meeting employers’ needs, and motivate institutions to meet employers’ needs better.

So I think Heqco really believes its own snake oil, just as it (used to?) really believe that all would be solved by focussing on learning outcomes.

This is an interesting debate! Professor Gavin Moodie’s points are even more interesting. Both of you have long experience in education, and I have full respect for both. I also do not want to undermine Professor Harvey Weingarten’s efforts; however, consider the statement that Professor Weingarten made and Professor Creso Sa quoted in this article: “…too few students are graduating with superior literacy and numeracy skills and too many – about one in four – are graduating with literacy and numeracy levels that are inadequate to compete successfully in today’s labour market” (I checked HEQCO’s blog post myself). If we do not ask the “validity” question, i.e. whether the right kind of tools were used to reach the study’s conclusions, a commentator would share the same support as Gavin did. May I ask someone who really knows about test validity whether HEQCO has really used the right kind of tools? I would say no, but I would like to know more.

Industries and employers all over the world place the same blame on academic institutions. A lot of the time they do so because their employees cannot respond to the needs of the production line the way a machine does – employers forget or ignore the difference between man and machine! They need someone to blame. I am sorry if I have overgeneralized…

Great piece!

So important for us to call out this nonsense before it goes any further.

I similarly focused on the other test, the one looking at critical thinking, in the February Bulletin:

https://www.caut.ca/bulletin/2019/02/commentary-misguided-standardized-testing-comes-ontario-higher-education

The point is to increase the power and the money that flows to HEQCO; then privatize the agency; then charge high fees for assessment and increase the salary of the CEO by 500%. This allows private capital to extract more surplus from the sector without delivering any benefits. The UK got a similar system.