Retraction Watch is a blog that reports on retractions of scientific papers, offering a window into the scientific process. Much of its content deals with the seamier side of academia, exposing the lying, stealing and fraudulent behaviour that exists behind facades of respectability. The blog was launched in 2010 by two journalists with backgrounds in medical reporting: Ivan Oransky, a former editor of several scientific publications who now teaches medical journalism at New York University’s Carter Journalism Institute, and Adam Marcus, a freelance writer and managing editor of Gastroenterology and Endoscopy News. It is a non-profit endeavour supported by grants, and neither Dr. Oransky nor Mr. Marcus takes a salary for overseeing the blog. The website, which draws 150,000 views per month and has garnered sterling reviews, has broken a number of stories that went on to become international news. The two recently spoke with writer Kerry Banks for University Affairs about their experience.

University Affairs: What prompted you to start your blog?

Ivan Oransky: We decided to launch Retraction Watch in the wake of a scandal involving an American anesthesiologist named Scott Reuben, who had fabricated data in more than 20 papers. Adam broke that story and Reuben ended up going to federal prison. We felt that retractions were not only interesting from the standpoint of journalists, but that they could offer a glimpse into how science works – and sometimes doesn’t. I said to Adam, “Why don’t we start a blog about this?” and he said, “Sure, great.” We honestly didn’t think at the time that there would be all that much to write about. It turned out to be a far bigger story than we had initially envisioned.

UA: Why was that?

Adam Marcus: We soon learned that journals were not doing an effective job of announcing and handling retractions, that they often obscured their findings, and that they made it difficult for readers to know what was really going on. So we realized we had an opportunity to bring some sunlight to an important area that was being kept in darkness.

UA: Did the number of retractions you were encountering surprise you?

Ivan Oransky: At first we were surprised, but today very little surprises us.

UA: Can you put the rise in retractions in context?

Adam Marcus: There was a tenfold increase in retractions from 2004 to 2012, while the number of published papers rose by only 40 percent.

UA: Why do you think the number of retractions has been increasing in recent years?

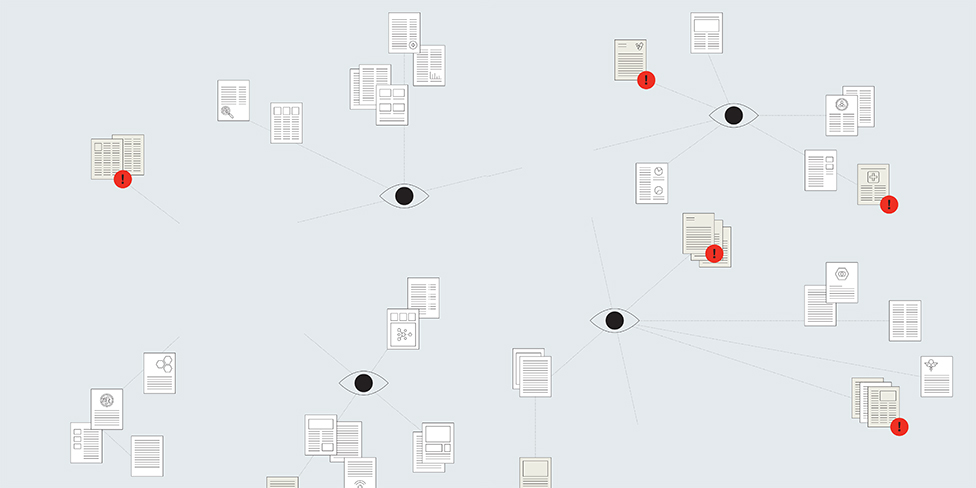

Ivan Oransky: It’s pretty clear that we have more retractions today because more people are looking [for them]. Papers are online now and that wasn’t true 20 years ago. There is advanced detection software. There are a million eyeballs on all these papers now. But there is also evidence that misconduct is on the rise. A couple of years ago, Elisabeth Bik, a microbiologist at Stanford, and her colleagues conducted a massive study looking for image duplication and manipulation in 26,000 published papers. Bik found inappropriate image manipulation in about one in 25 papers. This was a doubling from what had been found in 2000.

UA: What percentage of retractions can be attributed to unscrupulous scientific methods?

Ivan Oransky: About two-thirds of retractions are due to misconduct. We see things like image manipulation, falsification, fake data and plagiarism.

UA: What happens to a paper once it has been retracted?

Adam Marcus: A retracted article is not supposed to be vaporized from the universe. It is supposed to remain available with a “retracted” watermark on it so that people can see what happened with the study. Often journals don’t add the watermark, and sometimes they even make the papers disappear. But, generally, it exists as a retracted article. However, these papers still get cited. Some receive hundreds of citations even after they have been retracted.

UA: Where can you look to see if a paper has been retracted?

Adam Marcus: There are a few sites you can use to check for retractions, such as PubMed, but our site has the most comprehensive collection. We have more than 17,000 retractions in our database. That is thousands more than our competitors have.

Ivan Oransky: A good chunk of the time it’s impossible to tell if a paper has been retracted – the journal doesn’t say that, the publisher doesn’t say that, the index doesn’t say that. People don’t go out of their way to publish retractions.

UA: Why aren’t journals more proactive about publishing and explaining the reasons for retractions?

Ivan Oransky: The editors of journals blame lawyers. They say if they accuse someone of misconduct then that person will hire a lawyer and the lawyer will make a lot of noise and threaten to sue. I happen to think that journals should stand up to the threat and not be bullied, but you can see how they end up making decisions like that. There is a thriving legal practice today in defending people against allegations of misconduct.

UA: How do you decide if you want to cover a retraction on your blog?

Ivan Oransky: We look at the notice and see if there is a story there. We may file public records requests. If there has been an investigation, we’ll try to get a report of that investigation. We also check whether the notice reflects reality.

UA: What factors might motivate a scientist to commit misconduct?

Ivan Oransky: Publish or perish is a real thing. And if you know that how you’re going to be judged depends on you being published in high-impact journals, then you are going to do what it takes to get published in those journals. For most people that may mean working harder, trying to be smarter about things. For a small percentage of people that means cutting corners, and for an even smaller percentage of people that means cutting corners in a way that is considered misconduct.

UA: Is it true that high-impact journals have more retractions?

Adam Marcus: There is some data to support this. You can imagine that might be the case because there are more eyes on those papers. By the same token, people who are desperate to advance their careers might shoot for the moon and try to publish a fraudulent or shady study in a high-impact journal because it has the best chance of benefitting their careers.

UA: What kind of payoff is there for getting a paper published in a prestigious publication?

Adam Marcus: You can turn a single paper in a prestigious journal like Science or The New England Journal of Medicine into a job offer. In China, the stakes are incredibly high. Scientists can win big cash bonuses and jobs for being published in high-profile Western journals. And that money is coming from the Chinese government, which is pushing very strongly to raise the prestige of Chinese science. Overall, the rewards for being published in influential journals have never been higher.

UA: What other abuses are occurring?

Ivan Oransky: Some scientists use fake email addresses to give glowing reviews to their own papers. We broke that story in August 2012. We’ve catalogued all the retractions made because of fake peer reviews. There are now more than 630 in our database.

UA: What blame do universities share in this?

Adam Marcus: Our feeling is that universities are conflicted. They have an incentive not to come clean about the misbehaviour of their faculty because of the hit it may deliver to their public image. I think that’s a problem. I believe that the public has an absolute right to know what universities are doing with the public’s money.

Ivan Oransky: In many countries, universities are tasked with investigating their own, and that’s a recipe for conflict of interest. Universities that depend on soft-money funding are investigating people who are committing fraud using that same soft-money funding, so why are we surprised when they consistently downplay or even cover up cases of misconduct?

UA: Some of the cases that result in retractions must have the potential for serious consequences for people’s health. Can you offer an example of this?

Adam Marcus: Joachim Boldt, an anesthesiologist in Germany who amassed more than 90 retractions, did a lot of work on volume expanders, which are things you give patients so that their blood pressure doesn’t crash when they are critically ill or during surgery. Boldt fabricated his data to make a commercially available product look better than it may actually be. And his papers were highly cited.

Ivan Oransky: We recently had the case of a cancer researcher at the University of Texas who swapped her own blood samples for those that were supposedly taken from 98 human subjects. She called it an “inadvertent mistake.” I went to medical school, and I was always sure when I was taking blood from someone else. How is that an inadvertent mistake?

UA: Do you have any suggestions as to how we might reduce the incidence of misconduct in scientific research?

Adam Marcus: I don’t think you can do much to stop misconduct from occurring. Retractions happen, and when they do, we should push for greater transparency and better reporting about why. The quicker that journals respond to retractions, the better off science will be.

Ivan Oransky: We have to create incentives that aren’t all about publishing papers in high-impact journals. We need to create incentives that reward the kind of behaviour we really want to see in science. We want data sharing. We want open science. We want honest science. Let’s incentivize all of that.

This interview has been condensed and edited for clarity.